- Apr 7

Is AI going to make doctors redundant?

- Snehith G (edited by Arella E)

- Medicine, Technology, Artificial Intelligence

Imagine this. At 3 am, a patient arrives in the emergency department with chest pain. A machine learning algorithm instantly analyses the ECG, compares it with thousands of previous cases, and produces a probability score for myocardial infarction. The patient is fine, it reassures you. But you, as the only physician on site, realise that whilst the ECG looks normal when compared with the millions of ECGs the AI has been trained on, it shows slight ST depression rather than the usual ST elevation. It could be a posterior myocardial infarction. [1] A hidden heart attack. “I could have sent home a patient who has just had a heart attack,” you tell yourself.

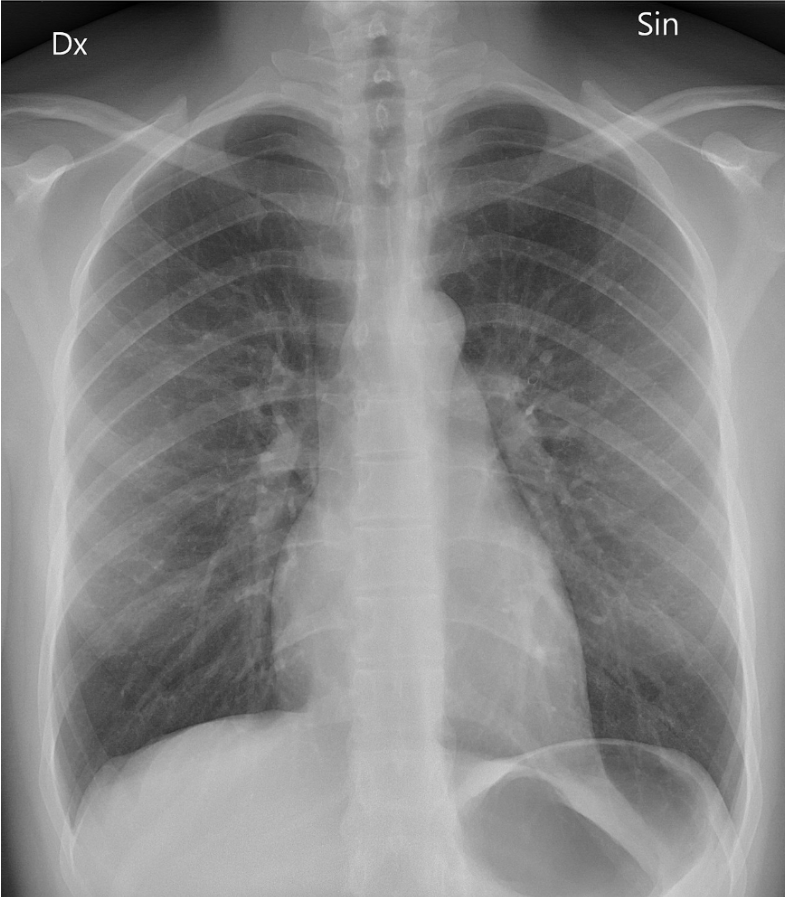

Figure 1

As part of the NHS’s 10 Year Health Plan, digital technology (including AI) is becoming increasingly prominent in many parts of healthcare. [2] This is not new, however: medicine has always evolved alongside technology. The discovery of X-rays in 1895 transformed diagnosis, yet radiologists did not disappear, for the simple reason that understanding what Figure 1 actually shows still requires years of training and experience. [3] MRI scanners, genomic sequencing, and robotic surgery have similarly changed how doctors work without eliminating the need for clinicians.

Artificial intelligence represents the latest and perhaps most powerful transformation. Modern machine learning systems can analyse vast quantities of medical data - radiology scans, genetic sequences, laboratory results, and patient records - far faster than any human. In controlled experiments, some AI systems now achieve diagnostic accuracy comparable to that of physicians. [4]

This raises the question: will AI make doctors redundant, or will it reshape the way medicine is practised?

AI in clinical imaging and diagnostics

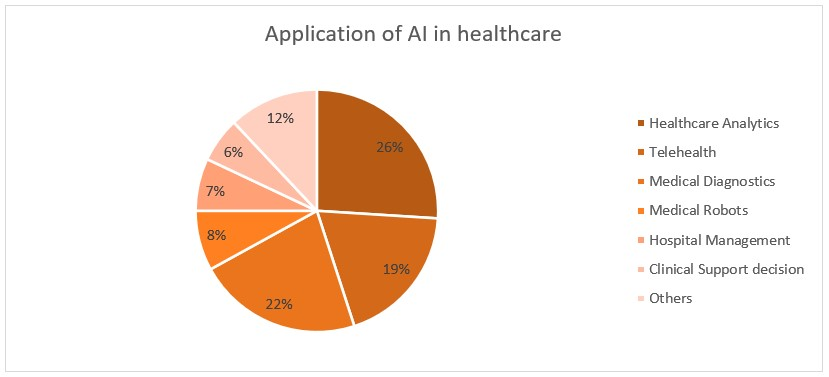

Clinical imaging and diagnostics is undoubtedly one of AI’s biggest strengths and, unsurprisingly, is where AI is used the most (see Figure 2). [5] This is because artificial intelligence performs exceptionally well at analysing large datasets. Deep learning algorithms can identify subtle patterns in medical images that may be difficult for the human eye to detect.

Figure 2

For example, neural networks trained on thousands of radiology scans can detect lung nodules or tumours with high accuracy. A large meta-analysis comparing AI systems with physicians found no significant difference in overall diagnostic accuracy, although human specialists still performed better in complex cases. [6]

One successful real-world example is IDx-DR, an AI system authorised by the US FDA in 2018 to screen for diabetic retinopathy using retinal photographs. [7] In primary care clinics, the algorithm can identify patients who need referral to an ophthalmologist, allowing faster screening and earlier treatment. So does this mean that primary care clinicians are being “replaced” in these situations?

Not necessarily. These systems only work effectively when the task at hand is clearly defined and the data show consistent patterns. Medicine rarely deals with such clean problems. Patients often arrive with incomplete histories, multiple diseases, and symptoms that do not fit a textbook description, and no two human bodies are exactly alike. No matter how much data you feed an AI system, it will always be limited by the fact that it doesn’t really understand what it’s looking at; it is simply finding patterns to predict the most likely result or diagnosis. Tasks aren’t always clearly defined either. Subtle cues from a patient, such as body language or hesitation in their voice, can be difficult for AI to interpret. This is known as “context blindness” and is the reason why most AI systems in medicine function as decision-support tools rather than autonomous clinicians. [8]

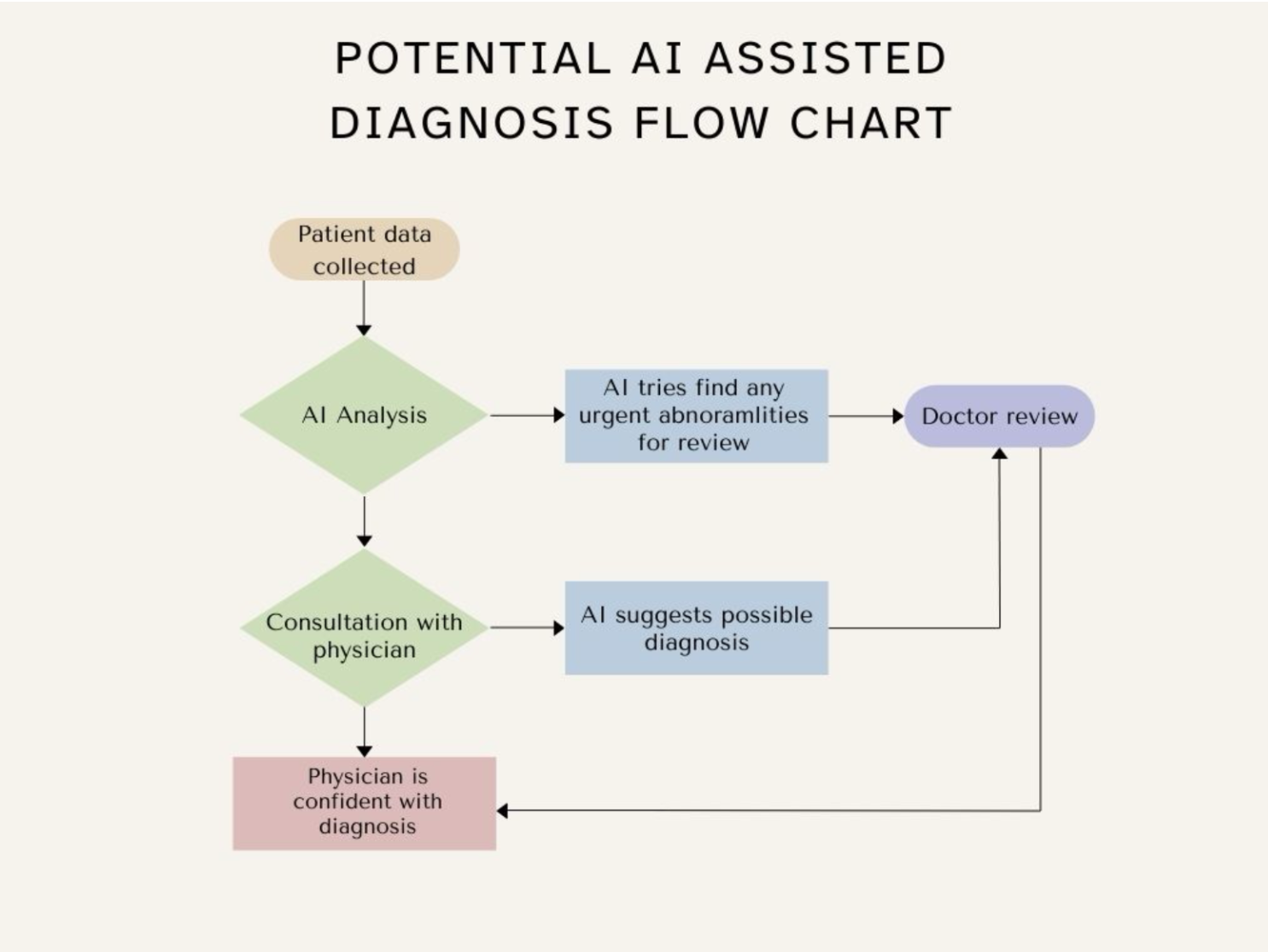

The flow chart I’ve created (see Figure 3) illustrates how AI could primarily be used as a triage and flagging tool rather than an autonomous decision-maker. By rapidly analysing patient data - such as ECGs, imaging scans, or laboratory results - AI systems can find patterns associated with disease and flag potential abnormalities for urgent review. This allows clinicians to prioritise high-risk cases more quickly, reducing the delay between data collection and human review. The doctor then interprets the flagged findings within the broader clinical context before making the final diagnosis and treatment decision. In this way, AI acts like the missing piece of the jigsaw, serving as an additional tool at a doctor’s disposal to further improve patient outcomes.

Figure 3 (note: abnormalities*)
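
As a rough illustration of the triage-and-flag idea in Figure 3, here is a minimal Python sketch. Everything in it is hypothetical: the `Case` fields, the `triage` function, and especially the `REVIEW_THRESHOLD` value are made up for illustration and are not taken from any real clinical system or validated cut-off. The point it tries to show is that the AI only reorders and flags the queue; no case is removed from it, so every patient is still reviewed by a doctor.

```python
from dataclasses import dataclass

# Hypothetical cut-off for an "urgent" flag. NOT a clinically validated value.
REVIEW_THRESHOLD = 0.30


@dataclass
class Case:
    patient_id: str
    data_type: str     # e.g. "ECG", "imaging", "lab"
    risk_score: float  # hypothetical model output between 0 and 1


def triage(cases, threshold=REVIEW_THRESHOLD):
    """Order cases for clinician review, highest risk first.

    Returns a list of (case, urgent) pairs. Low-risk cases are kept in the
    queue rather than discharged: the final decision stays with the doctor.
    """
    ordered = sorted(cases, key=lambda c: c.risk_score, reverse=True)
    return [(case, case.risk_score >= threshold) for case in ordered]


if __name__ == "__main__":
    # Made-up example data, for illustration only.
    cases = [
        Case("A", "ECG", 0.12),
        Case("B", "imaging", 0.81),
        Case("C", "lab", 0.45),
    ]
    for case, urgent in triage(cases):
        label = "URGENT REVIEW" if urgent else "routine review"
        print(f"{case.patient_id} ({case.data_type}): {label}")
```

In this sketch, the patient from the opening scenario would still reach the doctor even with a low score; the algorithm only changes the order in which cases are seen.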

Artificial intelligence is not human

A central limitation of artificial intelligence in medicine is the absence of clinical intuition. [9]

Experienced doctors often recognise patterns not through explicit calculation but through accumulated experience. A patient with a sore throat or an aching neck may appear “slightly unwell” in a way that is difficult to quantify but that signals to a doctor that something serious may be developing.

This form of knowledge - sometimes described as tacit knowledge - is difficult to encode in algorithms. It develops through years of clinical exposure and interaction with patients.

Doctors also rely on embodied information gathered during physical examination. For example, feeling a swollen joint or hearing the characteristic “whoosh” of a heart murmur may be what leads to an accurate diagnosis. AI misses out on this crucial information, as there is currently no way for it to capture the findings of these examinations. [10]

Equally important is the human relationship between doctor and patient. Healthcare is not purely biological; it requires a holistic approach to care. For example, a patient with osteoporosis may take strong painkillers that allow them to stay mobile and independent, but the medication causes them to experience severe nausea. An AI system analysing clinical guidelines might recommend switching to a different drug to remove the side effect. However, a doctor who understands that maintaining independence is the patient’s priority may instead decide to keep the effective pain relief and treat the nausea separately. This kind of decision depends on interpreting personal values as well as medical data, something AI systems struggle to do.

Ultimately, only a physician capable of clinical judgement can help patients navigate difficult decisions, such as whether to pursue aggressive treatment or focus on quality of life. These choices require empathy, ethical reasoning, and an understanding of individual circumstances. [11]

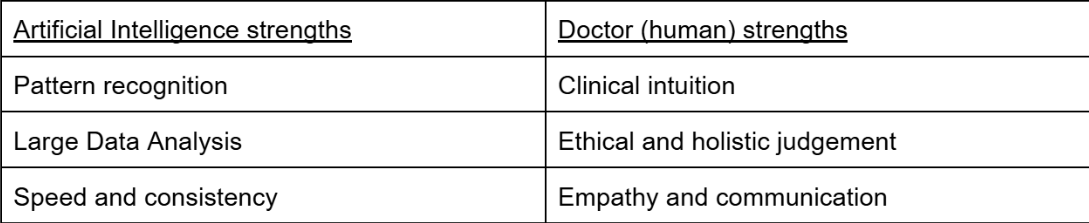

For this reason, even highly advanced diagnostic algorithms are unlikely to replace the broader role of physicians. Table 1 shows the potential strengths of both AI and physicians:

What’s important to realise is that their strengths rarely overlap. This makes them excellent at complementing each other to improve the quality of care provided to patients, rather than one completely replacing the other.

The ethical issues related to the use of AI

AI in healthcare also raises clear ethical concerns which may limit where it can be used, meaning certain decisions may need to remain the responsibility of doctors.

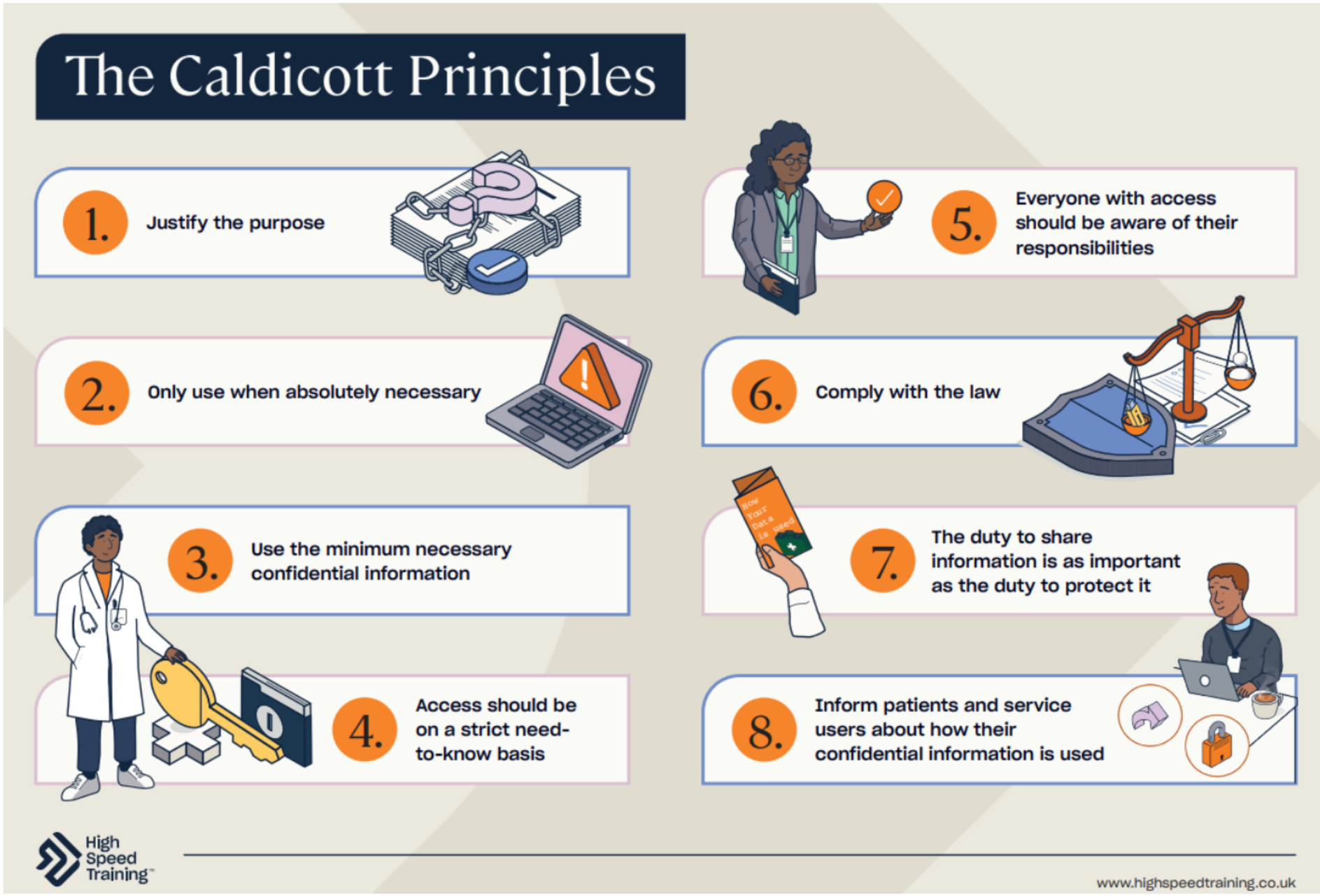

The Caldicott Principles guide the safe handling of health data (see Figure 4). They stress that patient information should only be used for legitimate purposes and on a strict need-to-know basis. [12] However, it is difficult for AI to follow these principles of confidentiality and privacy when it needs to be trained on immense amounts of patient data, often without consent being obtained from most of the patients concerned. Misuse of this information can harm patients and breach their trust.

Figure 4

Responsibility is another challenge. If an AI system contributes to a misdiagnosis, it is unclear who is accountable. The developer of the AI? The hospital that decided to implement the system? The clinician who used the AI? Human oversight is therefore essential so that patients aren’t left wondering why they were misdiagnosed. Ultimately, clinicians are, and will likely remain, legally and morally accountable for decisions that affect patients, a role AI cannot fill while responsibility for its decisions remains uncertain. [13]

Conclusion

Artificial intelligence will likely play a major role in transforming healthcare, particularly in diagnostics, imaging, and data processing. It is an important tool for assisting clinical decisions since it can analyse huge amounts of medical data swiftly and spot patterns. But the examples discussed show that good medicine requires much more than pattern recognition. Physicians must interpret complicated and uncertain situations, synthesise information from multiple sources, and take into account the individual values and circumstances of particular patients.

Hence, instead of making doctors redundant, AI is likely to reshape the role of physicians by working alongside them. AI systems will prove to be powerful decision-support tools that improve efficiency and help clinicians identify risks earlier, while doctors continue to provide the clinical judgement, ethical reasoning and human interaction. The future of healthcare is therefore not AI replacing doctors. It is a collaborative effort between humans and artificial intelligence to achieve better patient outcomes.

Bibliography

[1] Buttner R. Posterior Myocardial Infarction. 2024 Oct 23; Available from: https://litfl.com/posterior-myocardial-infarction-ecg-library/

[2] Alderwick H. Government’s 10 year plan for the NHS in England. BMJ. 2025 Jul 4;390:r1396.

[3] Hussain S. Modern Diagnostic Imaging Technique Applications and Risk Factors in the Medical Field: A Review. BioMed Research International [Internet]. 2022 Jun 6;2022(5164970):1–19. Available from: https://www.ncbi.nlm.nih.gov/pmc/articles/PMC9192206/

[4] Miranda J, Pereira-Silva R, Guichard J, Meneses J, Carreira AN, Seixas D. Artificial Intelligence Outperforms Physicians in General Medical Knowledge, Except in the Paediatrics Domain: A Cross-Sectional Study. Bioengineering [Internet]. 2025 Jun 14;12(6):653. Available from: https://www.mdpi.com/2306-5354/12/6/653

[5] IBEF. The Future of Artificial Intelligence in Healthcare: Transforming Patient Care [Internet]. India Brand Equity Foundation. IBEF; 2024. Available from: https://www.ibef.org/blogs/the-future-of-artificial-intelligence-in-healthcare-transforming-patient-care

[6] Takita H, Kabata D, Walston SL, Tatekawa H, Saito K, Tsujimoto Y, et al. A systematic review and meta-analysis of diagnostic performance comparison between generative AI and physicians. npj Digital Medicine [Internet]. 2025 Mar 22;8(1). Available from: https://www.nature.com/articles/s41746-025-01543-z

[7] Hunfeld E, Tayar A, Paul S, Poschkamp B, Großjohann R, Morawiec-Kisiel E, et al. Real-world performance of the AI diagnostic system IDx-DR in the diagnosis of diabetic retinopathy and its main confounders. Scientific Reports [Internet]. 2026 Jan 29;16(1). Available from: https://pmc.ncbi.nlm.nih.gov/articles/PMC12864748/

[8] Mohamed L. Interactive Context Refinement (ICR): Contextual Blindness in Generative AI Systems [Internet]. SSRN. 2025. Available from: https://papers.ssrn.com/sol3/papers.cfm?abstract_id=5394151

[9] Pelaccia T, Forestier G, Wemmert C. Deconstructing the Diagnostic Reasoning of Human versus Artificial Intelligence. Canadian Medical Association Journal. 2019 Dec 1;191(48):E1332–5.

[10] Lees J, Risør T, Sweet L, Bearman M. Digital Technology in Physical Examination teaching: Clinical Educators’ Perspectives and Current Practices. Advances in Health Sciences Education. 2024 Dec 3.

[11] Sauerbrei A, Kerasidou A, Lucivero F, Hallowell N. The impact of artificial intelligence on the person-centred, doctor-patient relationship: some problems and solutions. BMC Medical Informatics and Decision Making [Internet]. 2023 Apr 20;23(1):73. Available from: https://bmcmedinformdecismak.biomedcentral.com/articles/10.1186/s12911-023-02162-y

[12] UK Government. The Caldicott Principles [Internet]. GOV.UK. 2020. Available from: https://www.gov.uk/government/publications/the-caldicott-principles

[13] Habli I, Lawton T, Porter Z. Artificial Intelligence in Health care: Accountability and Safety. Bulletin of the World Health Organization [Internet]. 2020 Feb 25;98(4):251–6. Available from: https://www.ncbi.nlm.nih.gov/pmc/articles/PMC7133468/

Image sources:

[1] https://en.wikipedia.org/wiki/Chest_radiograph

[3] Created in Canva by Snehith Gannu

[4] https://www.highspeedtraining.co.uk/hub/the-caldicott-principles/